On the four ingredients that made 2022 the AI moment, the interface nobody talks about, and a way of thinking about technological change that you can use for the rest of your career.

A model nobody cared about

In June of 2020, OpenAI released GPT-3. It was, at the time, the largest language model ever built: 175 billion parameters, trained on a corpus filtered down from roughly 45 terabytes of raw text, and capable of writing essays, answering questions, generating code, and producing prose that was, to many readers, indistinguishable from human writing. The technical press covered it with a mix of awe and anxiety. Researchers called it a breakthrough. Sam Altman, OpenAI’s CEO, publicly warned people not to overhype it.

And then, for about two and a half years, almost nobody outside of the AI research and developer community used it.

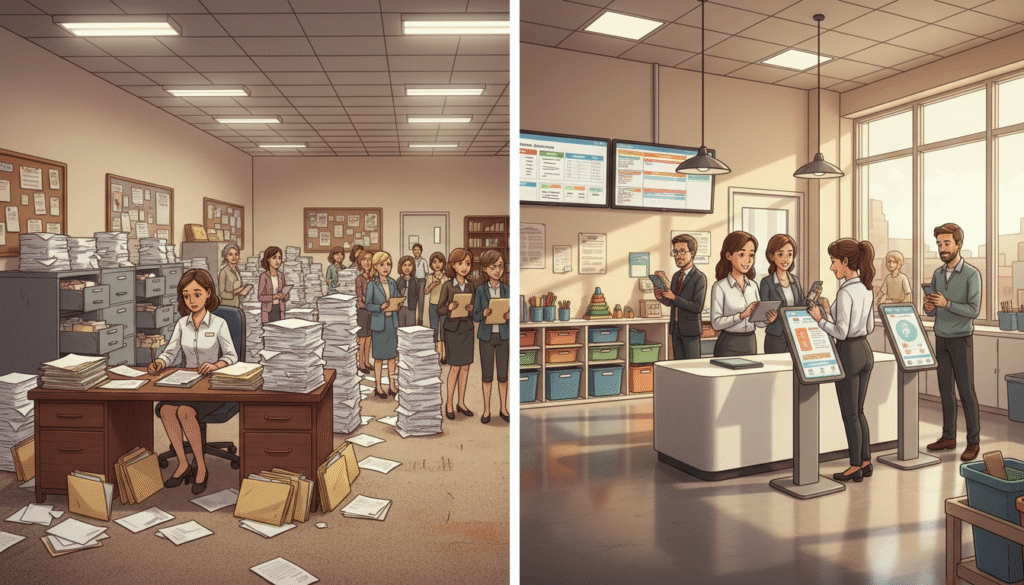

GPT-3 was available through an API, a programmer’s interface built for people who wrote code to interact with the model. If you were a developer, you could build applications on top of it. If you were a researcher, you could run experiments with it. If you were a normal person who wanted to ask it a question, you couldn’t. There was no place to type. There was no chat window. There was no “talk to GPT-3” button anywhere on the internet. The most powerful language model in the world was sitting behind a developer console, waiting for someone to build a front door.

On November 30, 2022, OpenAI built the front door. They called it ChatGPT. Within five days, it had a million users. Within two months, it had an estimated hundred million, making it, at the time, the fastest-growing consumer application in the history of the internet. The technology that had been sitting quietly for two and a half years became, overnight, the most talked-about product on Earth.

Here is the question I want to spend this post answering, because the answer teaches you something that goes far beyond AI: why did that particular tool, in that particular moment, work?

The short answer is that November 30, 2022 wasn’t a single breakthrough. It was a confluence — four ingredients arriving at the same table, finally in the right amounts, at the right time. And none of them, alone, would have been enough.
